The more you depend on technology and automation, the more vulnerable you are when things don’t work as planned.

In this post I wanted to cover a few tactics that can help you right the ship when things go sideways.

1. When troubleshooting campaigns…

When you build a campaign you should test it thoroughly, of course.

But even with regular testing, from time to time you’ll find yourself trying to unravel why things didn’t play out the way you expected. One of the best pieces of advice I can give for this scenario is to test using clean contact records.

What I mean is that most contacts have a history: a record of the things they’ve done in the past, and of the upcoming automations scheduled for them. When you’re trying to test a specific part of a specific campaign, it can be tricky to pinpoint where things aren’t working if you have other variables in the equation.

So, the advice here is to isolate by creating a new contact – one who has never been in your application before – so that you’ve got a blank slate to work with.

Pro-tip for Gmail Users

Did you know that Gmail allows you to quickly and easily create unlimited test addresses? It does.

If your normal email is hello@monkeypodmarketing.com then all you need to do is add a + after the word hello, followed by anything you like before the @ symbol. (Example: hello+test@monkeypodmarketing.com)

As a pro-pro-tip you could also include the test number, or the actual time that the test contact was created (that can help if you’re testing and there’s a delay of any kind). So, for example a test created at 3:45 pm might be hello+345@monkeypodmarketing.com.

Just remember to periodically clean up dummy contacts you’ve created during testing.
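If you create test addresses often, the Gmail trick above is easy to script. Here’s a minimal sketch in Python; the function names `plus_address` and `timestamped_address` are made up for this illustration, and the time tag simply follows the 3:45 pm → 345 example.

```python
from datetime import datetime
from typing import Optional


def plus_address(base_email: str, tag: str) -> str:
    """Insert +tag just before the @ of base_email (hypothetical helper)."""
    local, sep, domain = base_email.rpartition("@")
    if not sep or not local or not domain:
        raise ValueError(f"not an email address: {base_email!r}")
    return f"{local}+{tag}@{domain}"


def timestamped_address(base_email: str, when: Optional[datetime] = None) -> str:
    """Tag the address with the time the test contact was created."""
    when = when or datetime.now()
    # 12-hour clock with no leading zero on the hour, so 3:45 pm becomes "345"
    tag = f"{when.hour % 12 or 12}{when.minute:02d}"
    return plus_address(base_email, tag)


print(plus_address("hello@monkeypodmarketing.com", "test"))
print(timestamped_address("hello@monkeypodmarketing.com"))
```

The first call prints the hello+test address from the example; the second prints an address tagged with the current time, ready to paste into a fresh test contact.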

2. When troubleshooting Ecommerce…

Use an incognito window, or private browsing tab.

This one might sound obvious but I can’t count the number of times I’ve seen this simple trick resolve an otherwise befuddling scenario.

Here’s why: Infusionsoft’s ecommerce components are trying to simplify things for buyers – they’re trying to make buyers’ lives easier by remembering who they are, who their affiliate was, what products they wanted, and what promo codes they’ve used; and most of the time that’s what we want as well.

But, this can create unexpected behavior when we’re testing, updating, adding/removing promotions, and re-testing; so for the sake of your own sanity train yourself and your team to always use private browsing tabs when you’re testing your order forms or shopping cart.

3. When troubleshooting Emails…

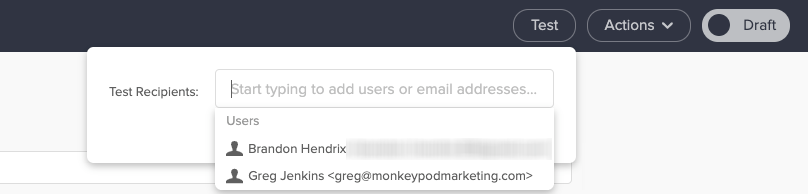

Emails are Infusionsoft’s bread and butter, but one gotcha I regularly see trip people up is that when you use the test function from within the email it sends that test to the user, not to an actual contact.

Did you catch that? The built-in test option sends using the details available on the user record, not the details from a contact record.

You might be wondering why that matters – and the answer is this: User Records don’t have custom fields.

So, if your email is using any custom field information in the email body (or merged into links) then testing the email to a user will lead you to think it’s not working.

To replicate the experience an actual contact would have you should test your emails by sending to a contact record (use an email other than the one tied to your user record).

4. Assumptions will ruin us

I know, you’re smart. I get it. Heck, I like to think I’m pretty clever too.

But assumptions will ruin us when it comes to troubleshooting, because if we assume something is working we’ll skip right over it, and that leads us into sketchy territory because now we’re building off of something we think is true, but might not actually be.

The reason I included this tip was because it’s burned me before, in a big way.

To make a long story short: I tested a campaign and it worked the way I wanted it to – then when I added a bunch of contacts to the campaign it didn’t do what we expected.

I assumed that what worked for individual test contacts would also be true when we loaded the campaign up en masse; and it wasn’t.

Want the longer story?

So, this stems back to when I was working with the African Leadership University. (Btw, have you seen that case study?)

The basic idea was that we were sending out the notifications to let prospective students know whether they had been admitted, declined, or waitlisted.

But we had to build a relatively complex campaign because we had to deliver those three messages in either English, French, or Portuguese, depending on the language that prospect had denoted during their application.

So, we had a series of decision diamonds – first segmenting by language, and then segmenting by their actual admission decision – and when I tested this campaign I did so by adding individual test records.

The first one was an admitted student with French as their language – it worked.

Then a declined student with English as their language – worked again.

I ran four or five tests and when I was satisfied with the results we decided it was time to add the contacts.

If you haven’t guessed by now, well, it didn’t go very well.

The decision diamonds went haywire and the contacts we added were incorrectly sent all three of the emails (admitted, declined, and waitlisted).

They all got the correct email – but they also got two others, and they had no way of knowing which one was accurate.

For some of these students this email was the single most important piece of news they’d ever received – and we botched it.

I was crushed.

As it turns out the decision diamonds had been corrupted during the process of cloning different campaign structures – and testing it one-by-one had worked just fine, but adding hundreds of contacts at the same time somehow exacerbated the issue.

We did our best to mitigate the impact – we quickly (and carefully) reached out to students to explain what had happened, and to let them know which decision was correct.

The issue was caused by a technical glitch – and my recourse is limited when it comes to fixing bugs in the software; but I still kick myself because I think I might have been able to avoid substantial pain if I had thought to test with a batch of contacts, rather than individuals.

Most of the ALU project went much more smoothly than this; if you haven’t seen the whole case study, it’s worth a watch.

This was one of the most painful experiences of my automation career – but the lesson was this: Assumptions will ruin us.

Just because it looks good on desktop doesn’t mean it’ll look good on mobile.

Just because it works in Gmail doesn’t mean it’ll work in Outlook.

Just because it worked for an individual doesn’t mean it’ll work for a group.

Just because it created the outcome you expected doesn’t mean it did what you expected.
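One way to act on that lesson is to test with a batch that covers every branch, and then check that each contact got exactly one email, not just the right one. The sketch below is plain Python and doesn’t talk to Infusionsoft at all; the names `build_test_batch` and `find_problems` are hypothetical, and the `send_log` is something you’d have to assemble yourself from whatever record of sent emails you have.

```python
from itertools import product

LANGUAGES = ["English", "French", "Portuguese"]
DECISIONS = ["admitted", "declined", "waitlisted"]


def build_test_batch(base_email, copies=1):
    """Return test contacts covering every language/decision combination."""
    local, _, domain = base_email.rpartition("@")
    batch = []
    for n in range(copies):
        for language, decision in product(LANGUAGES, DECISIONS):
            # Plus-addressed test email, e.g. hello+fr-admitted-0@...
            tag = f"{language[:2].lower()}-{decision}-{n}"
            batch.append({
                "email": f"{local}+{tag}@{domain}",
                "language": language,
                "decision": decision,
            })
    return batch


def find_problems(batch, send_log):
    """Return {email: emails_received} for contacts that did not get
    exactly the one email they should have.

    send_log is an assumed, hand-assembled list of
    (email, language, decision) tuples, one per email actually sent.
    """
    received = {}
    for email, language, decision in send_log:
        received.setdefault(email, []).append((language, decision))
    problems = {}
    for contact in batch:
        expected = [(contact["language"], contact["decision"])]
        got = received.get(contact["email"], [])
        if got != expected:
            problems[contact["email"]] = got
    return problems
```

With `copies=1` you get nine contacts, one per branch; bump `copies` up to load the campaign with a bigger group at once. A contact who received the correct email plus two extras shows up in `find_problems`, which is precisely the failure that a quick glance at “did the right email arrive?” would miss.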

5. Arm yourself with examples

If you’re troubleshooting something and it’s not making sense, at a certain point you’re probably going to end up liaising with Infusionsoft’s support team.

There are plenty of tips for talking to technical support, heck, I’ve even got two blog posts on it (one annnnd two) – but the most important piece of advice I have is to find yourself a screencapture software you like and get in the habit of using it.

I use and recommend Loom, but another popular option is Soapbox (from the fine folks at Wistia).

Infusionsoft’s support team genuinely wants to help you – but if they can’t replicate an issue then it’s infinitely more challenging.

(Sorta like when you take your car to the mechanic and the noise it was making suddenly stops…)

So, if you know you’re gearing up for a convo with support, do yourself a favor by firing up your screencapture software and recording examples of the behavior you’re seeing (or not seeing).

This is helpful for a few reasons, but primarily because it reduces the opportunity for something to get lost in translation – the longer it takes for the support rep to understand the issue, the more frustration you’re likely to experience. And being able to shortcut that process helps get everyone on the same page more rapidly.

The second benefit is that sometimes by recording the test you’re running it forces you to think differently, or helps you notice something you wouldn’t otherwise have seen; personally I’ve stumbled across more than one solution simply by trying to document the issue.

My sincerest hope is that these tips will save you some headache the next time you find yourself troubleshooting some misbehaving automation.

If you’ve got your own tips that have worked for you, or a painful automation story, please feel free to share in the comments below!

#6 Join a group like Monkeypod Grove for additional help and brain power when steps 1 to 5 didn’t work out and you’re still stumped.

Thanks Steve! Very true – know where to turn when you need a little more firepower.

Here are a couple of things that I’ve found help me:

1. When I’m testing a campaign in the first place (especially if there are Decision Diamonds or different states/conditions that contacts could be in with different actions taken as a result), I’ll often create a spreadsheet that breaks out all those possible conditions/actions.

Then as I test each condition/action, I’ll fill out the spreadsheet with details like (a) “confirmed to be working on 2019-06-05” or (b) a note such as “It’s intentional that such and such happens here instead of this or that because xyz”.

I can’t tell you how many times this type of documentation has helped me LATER, when I’m digging back into a campaign that’s not doing what it’s supposed to be doing (or what I LATER think it’s supposed to be doing).

2. I also make LOTS of notes within my campaigns (e.g., using notes on the canvas or within sequences), as well as in other documents. Again, that documentation helps me get back up to speed on what the initial plan and thinking was behind the campaign, because we all know how quickly we can forget what we worked on in the past.

Hope that helps! Thanks for your tips, Greg!

Grant

Excellent suggestions – love both of those, thanks for sharing Grant!

i agree with the NOTES!

1. copy the html for the pretty templates from greg’s post: https://www.monkeypodmarketing.com/infusionsoft-campaign-note-templates/

2. get TEXTEXPANDER and load all the code in there for your favorite notes

3. use em all over your campaigns!

i have a nice big bold linky one for loom links to explain my campaigns “;loom”, a green one for regular notes “;note”, and a ridiculous bright red one for stuff that needs my attention or to finish later “;finish”

just like this: https://www.loom.com/share/1f19735d24c74271bd7c77706bec1b32

This is excellent! Dug the video – thanks for sharing.

Hey Greg, This is all great advice. Been using Loom and applying your multi-count Gmail trick for some time and they are really great suggestions. Thanks!

Terry

Thanks Terry! Glad you found it useful. Appreciate you taking the time to leave a comment. 🙂

Nice to have options!